Bayesian phylogenetics

Erick Matsen

Review

- What does likelihood mean?

- What is its interpretation in terms of simulation?

Sometimes we want to make confident statements using trees that are highly uncertain.

Tracking SARS-CoV-2 transmission (colors)


What if we only care about transmission from country to country?

Working with random variables \(X\):

We can solve for \(X\) in “equations” like \(f(X) \sim Y\), obtaining expressions such as \(\mathbb P(X \mid Y)\); this is called inference.

We can also average with respect to \(X\):

\[ \int g(X) \, d\mathbb P(X \mid Y) \]

where we now average \(g(X)\) with respect to the probability distribution \(\mathbb P(X \mid Y)\).

Probabilistic approach to prediction

- \(Y\): horizontal distance traveled by a cannonball (random variable)

- \(X\): cannon angle (inferred random variable)

- Problem 1: given observed distribution \(Y\), infer distribution of \(X\).

- Problem 2: find the probability that a 10% bigger charge will hit the castle.

- Solve \(f(X) \sim Y\) to get \(\mathbb P(X \mid Y)\).

- Integrate \(\int \text{hit}_{10}(X) \, d\mathbb P(X \mid Y)\).
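The two problems can be sketched numerically. This is a minimal sketch under assumptions that are not part of the original example and are labeled as such: ideal projectile motion on flat ground with no air resistance, a known hypothetical muzzle speed `V0`, Gaussian measurement noise `SIGMA` on observed distances, a flat prior on the angle, and the reading of "10% bigger charge" as 10% more muzzle speed. Problem 1 uses a grid approximation to \(\mathbb P(X \mid Y)\); Problem 2 sums posterior mass over the angles whose new shot lands inside the castle.

```python
import math

G = 9.81       # gravitational acceleration (m/s^2)
V0 = 100.0     # hypothetical muzzle speed (m/s), assumed known
SIGMA = 20.0   # hypothetical std. dev. of distance measurements (m)


def distance(angle, speed=V0):
    """Range of an ideal projectile on flat ground, ignoring air resistance."""
    return speed ** 2 * math.sin(2 * angle) / G


def angle_grid(n=1000):
    """Midpoints of n equal bins covering angles in (0, pi/2)."""
    return [(i + 0.5) * (math.pi / 2) / n for i in range(n)]


def posterior_over_angles(observed, grid):
    """Problem 1: grid approximation to P(X | Y), flat prior, Gaussian noise."""
    log_w = [
        sum(-0.5 * ((y - distance(a)) / SIGMA) ** 2 for y in observed)
        for a in grid
    ]
    top = max(log_w)  # subtract the max before exponentiating, for stability
    w = [math.exp(lw - top) for lw in log_w]
    total = sum(w)
    return [wi / total for wi in w]


def prob_hit(observed, castle_near, castle_far, charge_factor=1.1):
    """Problem 2: integrate hit_10(X) against P(X | Y) as a weighted sum."""
    grid = angle_grid()
    post = posterior_over_angles(observed, grid)
    speed = charge_factor * V0  # assumption: 10% more charge = 10% more speed
    return sum(
        p for a, p in zip(grid, post)
        if castle_near <= distance(a, speed) <= castle_far
    )


observed = [575.0, 580.0, 570.0]  # made-up distances, for illustration only
print(prob_hit(observed, 650.0, 750.0))
```

Note that we never report a single "best" angle: the answer to Problem 2 averages over every angle consistent with the data, weighted by its posterior probability.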

Biological experiments are measurements with uncertainty

Model-based statistical inference ✓

We can solve for \(X\) in “equations” like \(f(X) \sim Y\), inferring an unknown distribution for \(X\) (what can we learn about the angle of the cannon?).

We can push uncertainty through an analysis using integrals like \[ \int g(X) \, d\mathbb P(X \mid Y). \] (We don’t really care what the angle of the cannon is; we just want to know the probability that the shot will hit the castle!)

Bayes theorem

\[ P(X \mid D) = \frac{P(D \mid X) P(X)}{P(D)} \]

\[ P(D) = \sum_{X} P(D \mid X) P(X) \]

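What is our height divided by the average elevation? \(P(D)\) is the prior-weighted average of the likelihood \(P(D \mid X)\), so the posterior is the prior reweighted by the ratio \(P(D \mid X) / P(D)\).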
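For a finite set of hypotheses, Bayes theorem is a few lines of arithmetic. In this minimal sketch the hypotheses `A`, `B`, `C`, their uniform prior, and their likelihoods are made up for illustration; exact fractions make the normalization by \(P(D)\) easy to check by hand.

```python
from fractions import Fraction


def bayes(prior, likelihood):
    """Return P(X | D) for a finite set of hypotheses X.

    prior: dict mapping each hypothesis x to P(x)
    likelihood: dict mapping each hypothesis x to P(D | x)
    """
    # P(D) = sum_X P(D | X) P(X): the prior-weighted "average elevation"
    evidence = sum(likelihood[x] * prior[x] for x in prior)
    # Posterior = prior times (height / average elevation)
    return {x: prior[x] * likelihood[x] / evidence for x in prior}


# Hypothetical example: three hypotheses with a uniform prior.
prior = {"A": Fraction(1, 3), "B": Fraction(1, 3), "C": Fraction(1, 3)}
likelihood = {"A": Fraction(1, 10), "B": Fraction(2, 10), "C": Fraction(6, 10)}
print(bayes(prior, likelihood))  # {'A': Fraction(1, 9), 'B': Fraction(2, 9), 'C': Fraction(2, 3)}
```

Here \(P(D) = 3/10\), so hypothesis `C`, whose likelihood is twice that average, ends up with twice its prior probability.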

Now, what is model-based statistical inference on discrete mathematical objects?

Motivation: we would like to decide whether an individual has been superinfected, i.e., infected with a second viral variant in a separate event.

Integrate out phylogenetic uncertainty

To decide whether superinfection occurred, we would like to calculate \[ \int_S g(T) \, d\mathbb P(T \mid Y) \] where \(T\) is now a phylogenetic-tree-valued random variable.

If we can draw samples \(T_1, \ldots, T_N\) from the posterior distribution \(\mathbb P(T \mid Y)\) of trees given data, then \[ \int_S g(T) \, d\mathbb P(T \mid Y) \approx \frac{1}{N} \sum_{i=1}^{N} g(T_i). \]
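Once posterior samples are available, the integral becomes an ordinary average. In this minimal sketch the trees and the test function \(g\) are hypothetical: each sampled rooted tree is stored as its set of clades, and \(g(T)\) is the indicator that two sequences `a1` and `a2` (imagined as coming from one individual) form a clade. A real superinfection analysis would use a \(g\) suited to that question and trees sampled by an MCMC program.

```python
def posterior_average(tree_samples, g):
    """Estimate the integral of g(T) dP(T | Y) by averaging g over samples."""
    return sum(g(t) for t in tree_samples) / len(tree_samples)


def clade(*taxa):
    """A clade is the set of leaf names below one internal node."""
    return frozenset(taxa)


# Hypothetical posterior sample: each rooted tree on taxa a1, a2, b, c is
# represented by its set of non-trivial clades.
samples = [
    frozenset({clade("a1", "a2"), clade("a1", "a2", "b")}),  # ((a1,a2),b),c
    frozenset({clade("a1", "a2"), clade("a1", "a2", "b")}),  # same topology again
    frozenset({clade("a1", "a2"), clade("b", "c")}),         # (a1,a2),(b,c)
    frozenset({clade("a1", "b"), clade("a1", "a2", "b")}),   # ((a1,b),a2),c
]


def a_seqs_form_clade(tree):
    """Hypothetical g(T): 1 if sequences a1 and a2 form a clade, else 0."""
    return 1 if clade("a1", "a2") in tree else 0


print(posterior_average(samples, a_seqs_form_clade))  # 0.75
```

Because \(g\) is an indicator, the average is simply the fraction of sampled trees with the property, i.e. an estimate of its posterior probability.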

Tracking SARS-CoV-2 transmission (colors)

Integrating over phylogenetic trees?

Phylogenetic trees have discrete topologies: there is no canonical distance between them, nor a natural total order.

But we still want to do inference and integration in this setting!

Rooted subtree-prune-and-regraft (rSPR) definition

Trees related by a single rSPR move are distance 1 apart.

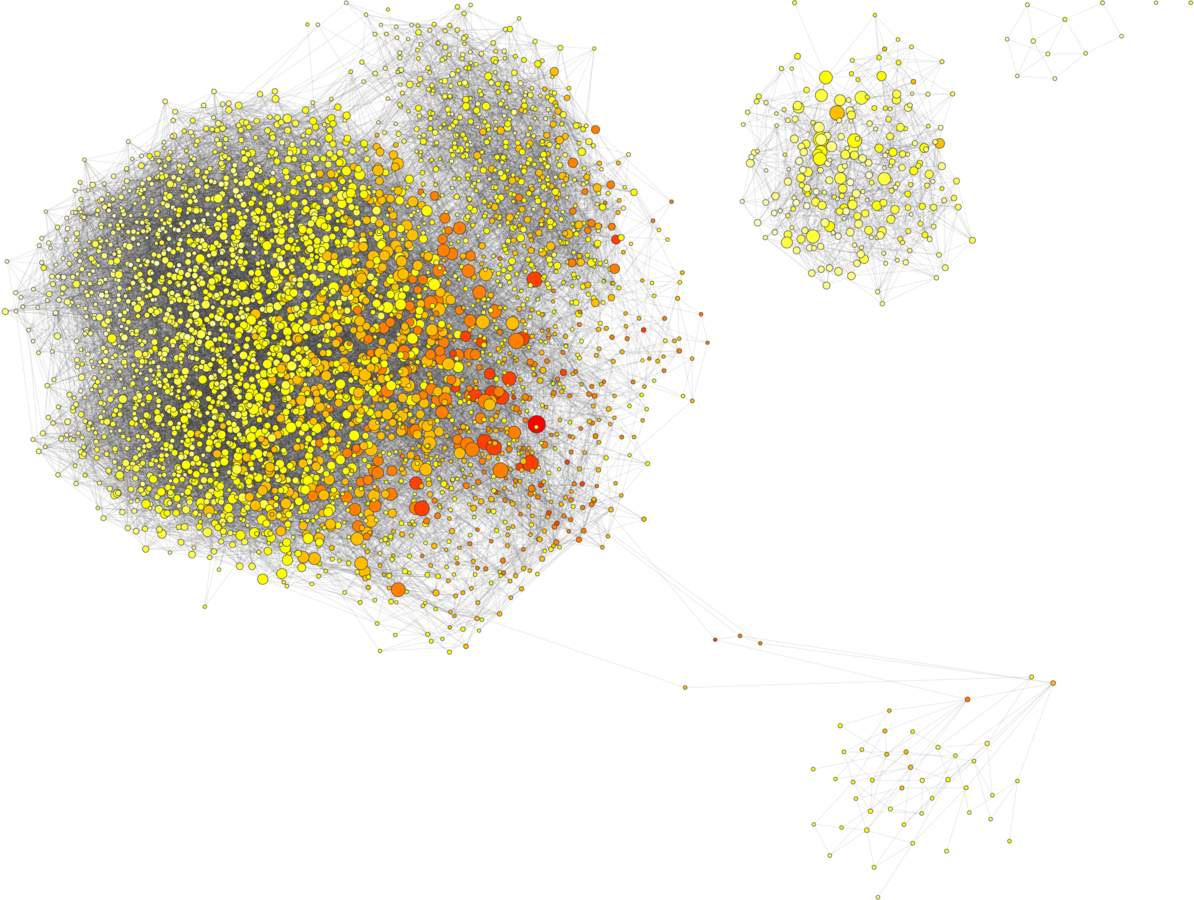

Tree graph connected by rSPR moves
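The rSPR neighborhood and the tree graph can be made concrete with a small sketch. Assumptions not taken from the slides: rooted binary trees are nested Python 2-tuples of leaf names, a canonical child order (`canon`) identifies trees that differ only in the order of children, and all helper names are hypothetical. Pruning a subtree lets its sibling take the parent's place; regrafting inserts a new parent node on any edge of the remaining tree, including a new root above it. A breadth-first search over these moves then explores the tree graph.

```python
from collections import deque


def canon(t):
    """Canonical form of a rooted binary tree given as nested 2-tuples."""
    if isinstance(t, str):
        return t
    a, b = canon(t[0]), canon(t[1])
    return (a, b) if repr(a) <= repr(b) else (b, a)


def nodes(t, path=()):
    """Yield (path, subtree) for every node; a path is a tuple of child indices."""
    yield path, t
    if not isinstance(t, str):
        for i, child in enumerate(t):
            yield from nodes(child, path + (i,))


def get(t, path):
    """Return the subtree of t at the given path."""
    for i in path:
        t = t[i]
    return t


def replace(t, path, new):
    """Return a copy of t with the subtree at path replaced by new."""
    if not path:
        return new
    children = list(t)
    children[path[0]] = replace(t[path[0]], path[1:], new)
    return tuple(children)


def rspr_neighbors(t):
    """All trees one rSPR move away: prune a subtree, regraft it onto another edge."""
    t = canon(t)
    result = set()
    for path, sub in nodes(t):
        if not path:
            continue  # the whole tree cannot be pruned
        parent_path = path[:-1]
        sibling = get(t, parent_path)[1 - path[-1]]
        rest = replace(t, parent_path, sibling)  # prune: sibling takes parent's place
        for target_path, target in nodes(rest):
            # Regraft onto the edge above target (above the root if target_path == ()).
            new = canon(replace(rest, target_path, (target, sub)))
            if new != t:
                result.add(new)
    return result


def reachable(start):
    """Breadth-first search of the tree graph whose edges are rSPR moves."""
    start = canon(start)
    seen, queue = {start}, deque([start])
    while queue:
        for nbr in rspr_neighbors(queue.popleft()):
            if nbr not in seen:
                seen.add(nbr)
                queue.append(nbr)
    return seen


print(len(reachable((("a", "b"), ("c", "d")))))  # 15 rooted binary trees on 4 taxa
```

For three taxa, `((a, b), c)` has the other two rooted topologies as its only rSPR neighbors; for four taxa the search reaches all 15 rooted binary trees, consistent with the tree graph being connected.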

Metropolis-Hastings algorithm

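- If you propose a better tree (higher posterior probability), accept that move

- If you propose a worse tree, accept that move with a non-zero probability: for a symmetric proposal, the ratio of the new tree’s posterior probability to the current one’s

- It’s all arranged so that, in the long run, you sample trees in proportion to their posterior probability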
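A minimal sketch of these rules, assuming a hypothetical toy tree graph given as a dictionary and made-up unnormalized posterior weights. It proposes a uniformly random neighbor, as a random-walk MCMC over rSPR moves would, and includes the Hastings correction that is needed when trees have different numbers of neighbors (the proposal is then not symmetric). Visit frequencies approach the normalized weights.

```python
import random
from collections import Counter


def metropolis_hastings(neighbors, weight, start, n_steps, rng):
    """Random-walk Metropolis-Hastings on a graph of trees.

    neighbors: dict mapping each tree to the list of trees one move away
    weight: dict mapping each tree to an unnormalized posterior probability
    """
    x = start
    visits = Counter()
    for _ in range(n_steps):
        y = rng.choice(neighbors[x])  # propose a uniformly random neighbor
        # Hastings ratio: posterior ratio times the proposal correction
        # q(x | y) / q(y | x) = deg(x) / deg(y).
        ratio = (weight[y] / weight[x]) * (len(neighbors[x]) / len(neighbors[y]))
        if rng.random() < ratio:  # a ratio >= 1 is always accepted
            x = y
        visits[x] += 1  # a rejected proposal counts the current tree again
    return visits


# Hypothetical toy "tree graph": four trees in a path T1 - T2 - T3 - T4.
neighbors = {"T1": ["T2"], "T2": ["T1", "T3"], "T3": ["T2", "T4"], "T4": ["T3"]}
weight = {"T1": 1.0, "T2": 2.0, "T3": 3.0, "T4": 4.0}
n = 200_000
visits = metropolis_hastings(neighbors, weight, "T1", n, random.Random(0))
for tree in sorted(visits):
    print(tree, round(visits[tree] / n, 3))  # roughly 0.1, 0.2, 0.3, 0.4
```

Only ratios of weights appear, so the normalizing constant \(P(D)\) from Bayes theorem is never needed; this is what makes MCMC practical for posteriors over trees.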

Try out MCMC robot!

Markov chain Monte Carlo

Subset to high probability nodes

The top 4096 trees for a data set

The posterior probability of a tree is the probability that the tree is correct, given the data, the model, and the priors

- Bayesians sample from this posterior

- If you can deal with a prior, it’s the statistically right thing to do

- Sometimes we aren’t actually interested in the tree, so we can integrate it out

- But! Even a short alignment of 100 taxa can take hours

Why is random-walk Markov chain Monte Carlo so slow?

The efficiency of MCMC depends on the fraction of good neighbors.
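One way to make "fraction of good neighbors" concrete: under a random-walk proposal that picks a uniformly random neighbor, the fraction of a tree's neighbors that are themselves good is exactly the chance that a single proposal from that tree lands on a good tree. In this sketch the graph and the set of "good" trees are hypothetical; how good trees are chosen (for example, a high-posterior set) is an assumption, not something the slides specify.

```python
def good_neighbor_fraction(neighbors, good):
    """For each good tree, the fraction of its neighbors that are also good.

    With a proposal that picks a uniformly random neighbor, this is the
    probability that one proposal from that tree lands on a good tree.
    """
    return {
        t: sum(nbr in good for nbr in neighbors[t]) / len(neighbors[t])
        for t in sorted(good)
    }


# Hypothetical 4-regular toy graph on six trees.
neighbors = {
    "T1": ["T2", "T3", "T4", "T5"],
    "T2": ["T1", "T3", "T5", "T6"],
    "T3": ["T1", "T2", "T4", "T6"],
    "T4": ["T1", "T3", "T5", "T6"],
    "T5": ["T1", "T2", "T4", "T6"],
    "T6": ["T2", "T3", "T4", "T5"],
}
good = {"T1", "T2"}
print(good_neighbor_fraction(neighbors, good))  # {'T1': 0.25, 'T2': 0.25}
```

When this fraction is small, most proposals from a good tree go to bad trees and are usually rejected, so the chain lingers and explores slowly.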

Number of good neighbors for 41 sequences (!)

Whidden & Matsen IV. (2015). Quantifying MCMC exploration of phylogenetic tree space. Systematic Biology.